% Scale the Z values of the points to the probability range GridCount = zeros(gridXBinSize,gridYBinSize) OccupancyGrid = zeros(gridXBinSize,gridYBinSize, 'like',ptCloudProcessed.Location) GridYBinSize = round(abs(spatialLimits(2,2) - spatialLimits(2,1)) / gridStep) īinIndices = pcbin(ptCloudProcessed,numBins,spatialLimits, 'BinOutput',false) GridXBinSize = round(abs(spatialLimits(1,2) - spatialLimits(1,1)) / gridStep) % The occupancy grid is created by scaling the points from 0m - 5m in % height to the probability values of % Set the occupancy grid size to 100 m with a resolution of 0.2 m Title( 'Point cloud before and after preprocessing') PcViewAxes = pcshowpair(ptCloud, ptCloudProcessed) % Visualize and compare the point cloud before and after preprocessing.įigure( 'Name', 'Processed Point Clouds', 'NumberTitle', 'off') PtCloudProcessed = pointCloud(locationPts) LocationPts(:,3) = locationPts(:,3) + sensorHeight SensorHeight = groundTruthPosesLidar(1).Translation(3) This information can be obtained from the lidar % point cloud % Since we use the Z-values to determine the occupancy in a grid image, % move the point cloud by sensor height so that more points are included to % calculate occupancy % Set the sensor height. PtCloudNonEgo = select(ptCloudClipped,~egoFixed) % Find the indices of the points other than the ego vehicle and create a % point cloud with these pointsĮgoIndices = findNeighborsInRadius(ptCloudClipped,sensorLocation,vehicleRadius) ĮgoFixed = false(ptCloudClipped.Count,1) % Segment and remove ego vehicle % Set location of the sensor and vehicle radius PtCloudClipped = select(ptCloudDenoised,indices) Indices = findPointsInROI(ptCloudDenoised,roi)

This is done to improve the processing performance % and also to include only the areas of interest % Set the limits to select the point cloud In summary, these are the steps used to calculate the vehicle odometry: This technique is also used in pcregistercorr. By successively composing these transformations, you transform each point cloud back to the reference frame of the first point cloud.

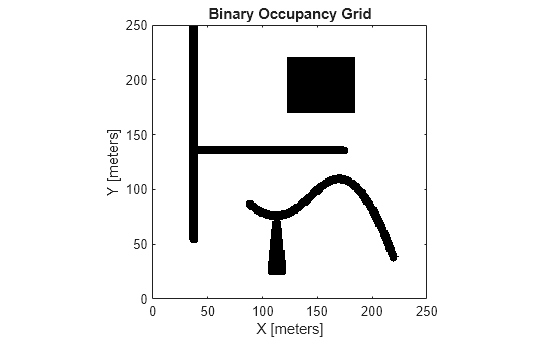

#Occupancy grid mapping matlab code registration#

Use a phase correlation registration algorithm to calculate the 2-D relative transformation between two images. Hence, convert the point clouds into 2-D occupancy grid images by projecting the points onto the ground plane. To build a 2-D occupancy grid map, 2-D pose estimations are sufficient. This approach of incrementally estimating the trajectory is called odometry. From these poses, the estimated trajectory of the vehicle is determined. In order to build a map using the collected point clouds, the relative poses between the successive point clouds need to be estimated. GroundTruthPosesLidar = helperGetLidarGroundTruth(simOut) Vehicle Odometry Using Phase Correlation Algorithm % Extract ground truth for the lidar data as an array of rigid3d objects PtCloudArr = helperGetPointClouds(simOut) This is a rough explanation omitting mathematical details.Error( "Unreal Engine Simulation is supported only on Microsoft" + char(174) + " Windows" + char(174) + ".")

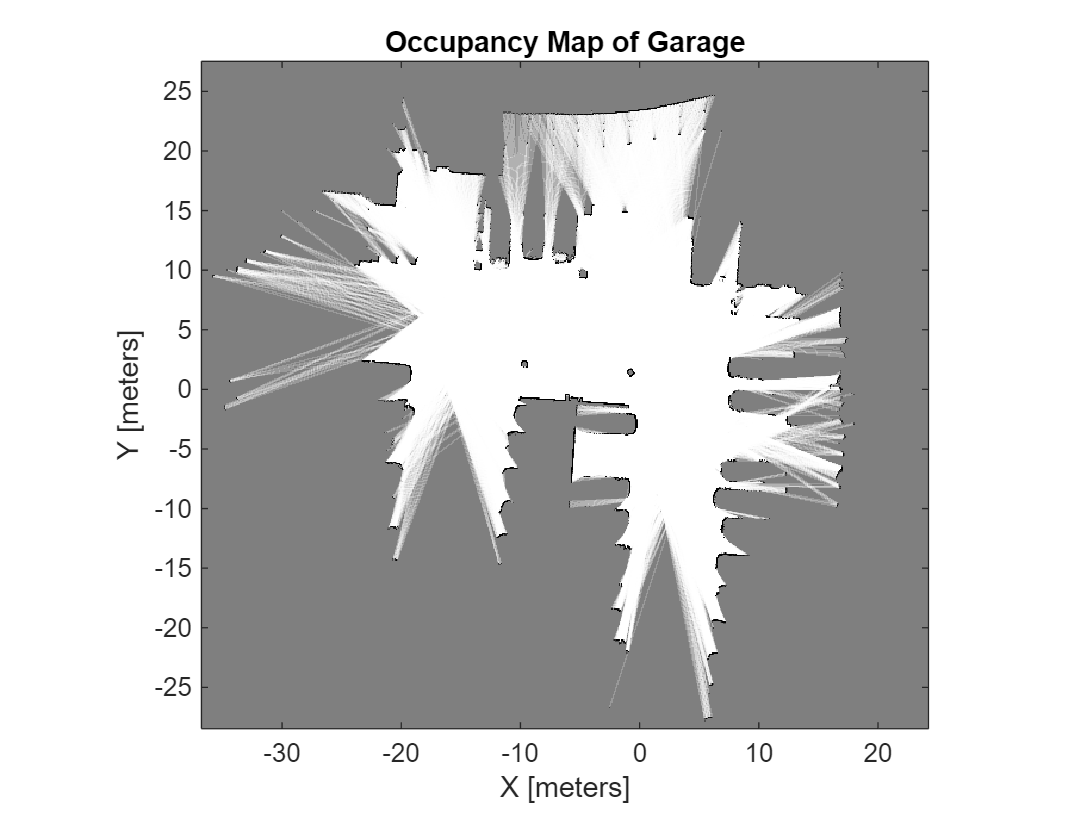

This is done by ray-casting the measurements on the grid and decreasing the associated cell's probability. As the robot moves around getting laser range measurements, it "sheds light" into the map and brightens the free-space areas. In the example above, the darker the grid the more uncertain the representation. The environment is discretised into (here even) cells and the grid values represent obstacle uncertainty. Occupancy grid mapping is a probabilistic representation of an environment. Another assumption is that the environment is static, thus it can get only more certain(brighter), and not more uncertain(darker) that in the initialisation. By relaxing this requirement, the problem gets transformed into a SLAM problem, which is beyond the scope of this example. This assumption should make sense, since we are addressing exclusively a mapping problem which requires good knowledge or the robots position to create an accurate map. Odometry pose data is treated as the real pose of the robot, in that sense there is no variance/uncertainty in the pose data, hence the robot belief distribution at every step is represented by a dirac centered at the robots pose. The script will run by using the measurement file laser_0.log which includes laser sensor data as well as odometry data.